Why AI Agent Security Must Be Intent-Based

AI agents are quickly becoming the new workforce of the modern enterprise. They write code, manage infrastructure, analyze data, resolve operational incidents, and automate business workflows. Increasingly, they interact directly with the systems that run a company’s operations, making decisions and executing actions without human intervention.

But the security model protecting them was designed for human users and legacy, deterministic software. That model is about to break. Securing AI agents requires a new model, one that starts with identity, understands intent, and enforces control.

AI Agents Break Traditional Security Assumptions

Traditional software behaves predictably. Given the same inputs, it produces the same outputs. Security models were built around that assumption, which allowed organizations to define permissions and trust those permissions to remain relatively stable over time.

AI agents are fundamentally different. They are goal-driven systems. They interpret objectives, plan actions, and adapt their behavior based on context, data, and feedback. An agent tasked with resolving an infrastructure issue might read logs, query systems, modify configurations, restart services, and open tickets.

Two agents with identical permissions may behave very differently depending on the task they are trying to accomplish. That unpredictability is exactly what makes AI agents powerful. It is also what makes them difficult to secure.

AI Becomes Dangerous When It Gets Access

For the past several years, much of the conversation around AI security has focused on large language models (LLMs). Organizations have invested heavily in prompt filtering, output moderation, and other guardrails designed to shape how AI systems respond.

Those controls matter in limited contexts, but they miss the larger shift underway. The real transformation is not chatbots answering questions; it’s AI agents taking action. The moment AI moves from generating responses to executing actions, the security problem fundamentally changes.

AI agents become truly dangerous only when they get access. Once an agent has credentials to critical systems, the security boundary has already been crossed. Guardrails may shape how the agent communicates, but they cannot prevent the consequences of what it is allowed to do.

Why Existing Security Controls Fall Short

Many organizations are attempting to secure AI agents by retrofitting approaches originally developed for human users or traditional machine workloads. These approaches rely heavily on static permissions and predefined roles.

Traditional identity and access management (IAM) systems answer a simple question: what can an identity access? For human users and predictable applications, this model works reasonably well. But AI agents rarely operate within narrow, predefined workflows. Instead, they pursue objectives that may span many systems in sequence, and they adapt their behavior at runtime.

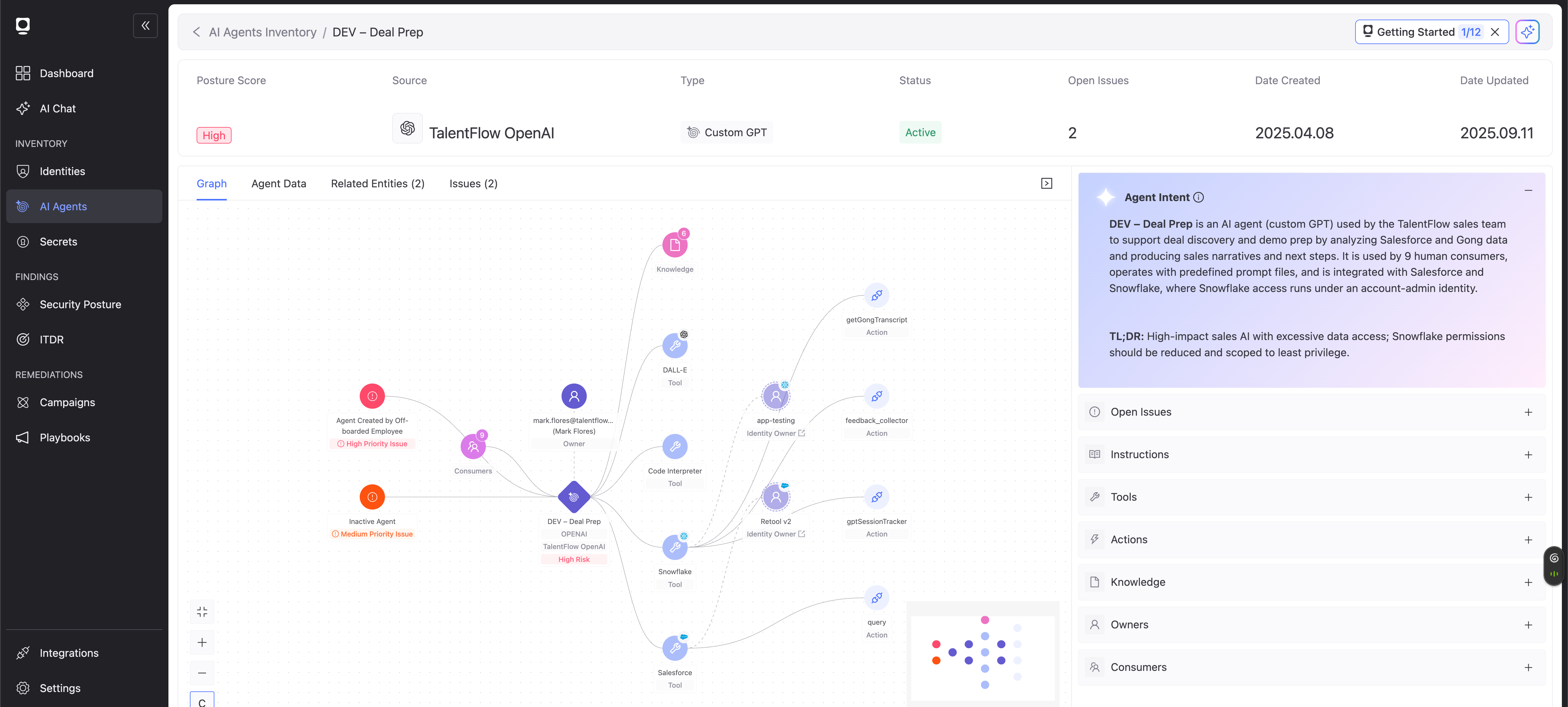

Consider a simple operational task such as resolving a failed deployment. An AI agent may read logs, query monitoring systems, modify infrastructure, create tickets, trigger new automation pipelines, and notify engineering teams. To avoid breaking workflows, developers often grant agents overly broad permissions. Over time, those permissions accumulate. Agents inherit creator-level privileges, temporary access becomes permanent, and security teams lose visibility into how those identities are actually being used.

When something goes wrong, security teams frequently cannot answer basic questions about the AI agents operating in their environments:

- Who owns the agent?

- What identities does it use?

- What is it designed to do?

- What systems can it access?

When those questions cannot be answered, governance effectively collapses.
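As a minimal sketch, the four questions above can be treated as required fields on an agent inventory record. The `AgentRecord` class, its field names, and the `governance_gaps` helper are hypothetical, chosen only for illustration; they do not describe any particular product's schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentRecord:
    """Hypothetical inventory entry; field names are illustrative assumptions."""
    name: str
    owner: Optional[str] = None                          # Who owns the agent?
    identities: List[str] = field(default_factory=list)  # What identities does it use?
    purpose: Optional[str] = None                        # What is it designed to do?
    systems: List[str] = field(default_factory=list)     # What systems can it access?


def governance_gaps(agent: AgentRecord) -> List[str]:
    """Return the governance questions this record cannot answer."""
    gaps = []
    if not agent.owner:
        gaps.append("owner")
    if not agent.identities:
        gaps.append("identities")
    if not agent.purpose:
        gaps.append("purpose")
    if not agent.systems:
        gaps.append("systems")
    return gaps


# An agent with known credentials and reach, but no owner or stated purpose.
deploy_bot = AgentRecord(
    name="deploy-bot",
    identities=["svc-deploy-bot"],
    systems=["ci-pipeline", "ticketing"],
)
print(governance_gaps(deploy_bot))  # ['owner', 'purpose']
```

Any agent that returns a non-empty list here is one that a security team could not fully account for during an incident.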

Identity Becomes the Control Plane

Agents interact with enterprise systems through identities. They authenticate using service accounts, cloud IAM roles, API keys, and tokens. Every action an agent performs, whether reading data, modifying infrastructure, or triggering automation, ultimately depends on the permissions associated with those identities.

This makes identity the natural enforcement layer for governing autonomous systems.

If an organization wants to control what AI agents can do, the most effective place to enforce those controls is at the identity layer. Identity sits at the intersection of every system an agent touches. It determines what resources the agent can access and what actions it can perform. Identity controls access. Intent makes that access safe.

Intent Is the Missing Dimension

Traditional access control has primarily focused on actions, but AI agents are defined by purpose. An agent may be tasked with resolving infrastructure incidents, triaging cloud cost anomalies, automating customer onboarding, or coordinating software deployments. Each of these objectives may involve similar system interactions: reading logs, calling APIs, modifying configurations, or accessing databases.

From a traditional IAM perspective, those actions can appear nearly identical. But the purpose behind them is fundamentally different.

Intent-based security introduces a new layer of context: understanding what an agent is supposed to accomplish and evaluating its actions and access against that objective. Instead of asking “What can this agent do?” security teams need to start asking: “What should it be able to do to achieve its intended purpose?”

Once intent is defined, acceptable behavior becomes much clearer. Permissions can be scoped precisely to support the agent’s objective, and actions outside those boundaries become meaningful indicators of risk rather than simply anomalous events.
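One way to picture this is a declared intent that carries its own permitted set of actions. The sketch below is an assumption-laden illustration: the `Intent` class, the `(action, resource)` pair format, and the example resource names are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class Intent:
    """Hypothetical declaration of what an agent is supposed to accomplish."""
    purpose: str
    allowed: FrozenSet[Tuple[str, str]]  # permitted (action, resource) pairs


def within_intent(intent: Intent, action: str, resource: str) -> bool:
    """True if the requested action falls inside the declared intent boundary."""
    return (action, resource) in intent.allowed


cost_triage = Intent(
    purpose="triage cloud cost anomalies",
    allowed=frozenset({
        ("read", "billing-api"),
        ("read", "logs"),
        ("create", "ticket"),
    }),
)

print(within_intent(cost_triage, "read", "logs"))              # True
print(within_intent(cost_triage, "modify", "infrastructure"))  # False
```

The second call is the interesting one: the request may be technically possible under a broad role, but it falls outside the stated purpose, which is what turns it into a risk signal.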

Security Must Adapt at Runtime

AI agents are adaptive systems. They respond to new information, change their plans, and dynamically determine the actions required to achieve their goals. This means that security controls cannot rely entirely on static permissions assigned in advance.

Intent-based security enables organizations to continuously evaluate behavior. If an agent designed to analyze data suddenly attempts to modify infrastructure or exfiltrate sensitive information, that action can be restricted immediately. This creates a feedback loop between behavior and authorization, something traditional IAM systems were never designed to support.
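The feedback loop between behavior and authorization might look roughly like the following. This is a sketch under stated assumptions: the `RuntimeGuard` class is hypothetical, and the choice to deny all further requests from an agent after one out-of-intent action is an illustrative policy, not something the text prescribes.

```python
from typing import Dict, Set, Tuple

Action = Tuple[str, str]  # (action, resource)


class RuntimeGuard:
    """Hypothetical runtime check: past behavior feeds back into authorization."""

    def __init__(self, intents: Dict[str, Set[Action]]) -> None:
        self.intents = intents
        self.restricted: Set[str] = set()  # agents awaiting human review

    def authorize(self, agent: str, action: str, resource: str) -> str:
        if agent in self.restricted:
            return "deny"  # earlier violation still in effect
        if (action, resource) in self.intents.get(agent, set()):
            return "allow"
        # Out-of-intent action: block it and restrict the agent (assumed policy).
        self.restricted.add(agent)
        return "deny"


guard = RuntimeGuard({"data-analyst": {("read", "warehouse")}})

print(guard.authorize("data-analyst", "read", "warehouse"))         # allow
print(guard.authorize("data-analyst", "modify", "infrastructure"))  # deny
print(guard.authorize("data-analyst", "read", "warehouse"))         # deny
```

The third request is denied even though it matches the agent's intent, because the earlier violation changed the agent's standing. A static permission check has no equivalent of that state.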

Lifecycle Governance Becomes Critical

AI agents are easy to create, easy to copy, and easy to forget. A developer can deploy a new agent in minutes, often as part of an experiment or automation workflow. Over time, those agents evolve, gain additional permissions, and sometimes become embedded in critical processes.

Risk rarely appears at the moment an agent is created; it accumulates over time. An agent that begins as a small experiment may quietly become business-critical while retaining overly broad access to enterprise systems. Without lifecycle governance, organizations lose visibility into how these agents evolve and what permissions they accumulate.

Treating AI agents as first-class identities allows organizations to apply governance practices that already exist for workforce identities: ownership assignment, access review, privilege management, and lifecycle controls.
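To make those practices concrete, here is a minimal sketch of a periodic review over agent identities. The `AgentIdentity` fields and the 90-day review and 30-day idle thresholds are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class AgentIdentity:
    """Hypothetical lifecycle record for an agent's identity."""
    name: str
    owner: Optional[str]
    last_reviewed: date
    last_used: date


def review_findings(
    agent: AgentIdentity,
    today: date,
    review_every: timedelta = timedelta(days=90),  # assumed review cadence
    idle_after: timedelta = timedelta(days=30),    # assumed idle threshold
) -> List[str]:
    """Return lifecycle actions this agent identity currently needs."""
    findings = []
    if agent.owner is None:
        findings.append("assign owner")
    if today - agent.last_reviewed > review_every:
        findings.append("access review overdue")
    if today - agent.last_used > idle_after:
        findings.append("candidate for retirement")
    return findings


experiment = AgentIdentity(
    name="cost-experiment",
    owner=None,
    last_reviewed=date(2025, 1, 15),
    last_used=date(2025, 5, 20),
)
print(review_findings(experiment, today=date(2025, 6, 1)))
# ['assign owner', 'access review overdue']
```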

The Security Model for Agentic AI

Securing AI agents requires a new model built around identity, intent, and lifecycle governance. Discover, Understand, and Enforce is how that model becomes operational. Organizations must be able to continuously discover AI agents across their environments and identify the identities those agents use to access systems. They must understand the intended purpose of each agent and ensure its permissions align with that purpose. From there, security controls can enforce least-privilege access, detect actions that fall outside the defined intent boundaries, and manage the lifecycle of agents as they are created, modified, and eventually retired. This approach allows organizations to deploy autonomous systems safely without sacrificing speed or innovation.

To secure autonomous AI systems, organizations must be able to:

- Discover every AI agent operating in their environment.

- Understand each agent’s intent, what it can access, and why.

- Enforce policy, continuously right-size permissions, automate remediation, and manage lifecycles.

Together, these three capabilities create a scalable security model for autonomous systems: discover every agent, understand what it accesses and why, and enforce the controls that govern its permissions, behavior, and lifecycle.
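Right-sizing permissions, one part of the enforcement step, can be sketched as a comparison between what an agent has been granted and what its intent requires. The `right_size` function and the permission strings below are hypothetical and only illustrate the idea.

```python
from typing import Dict, List, Set


def right_size(granted: Set[str], required: Set[str]) -> Dict[str, List[str]]:
    """Compare granted permissions with those the agent's intent requires."""
    return {
        "keep": sorted(granted & required),     # granted and needed
        "revoke": sorted(granted - required),   # granted but outside the intent
        "missing": sorted(required - granted),  # needed but not yet granted
    }


# Illustrative permission names; not tied to any real IAM system.
granted = {"logs:read", "metrics:read", "infra:write", "db:admin"}
required = {"logs:read", "metrics:read", "tickets:create"}

plan = right_size(granted, required)
print(plan["keep"])     # ['logs:read', 'metrics:read']
print(plan["revoke"])   # ['db:admin', 'infra:write']
print(plan["missing"])  # ['tickets:create']
```

In practice the `required` set would have to come from the agent's declared intent and observed usage, which is why discovery and understanding come before enforcement.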

The Identity Platform for the Age of AI Agents

Every major shift in identity has created a new security category. The rise of workforce identity created IAM and IGA platforms. The explosion of cloud workloads introduced machine (or non-human) identity management.

AI agents represent the next identity wave. Inside many organizations, AI agents are already beginning to outnumber human users. They will manage infrastructure, orchestrate workflows, interact with customers, and coordinate across systems at machine speed.

Securing how these agents access enterprise systems will become one of the most important security challenges of the next decade. Intent-based security, anchored in identity, provides the foundation for solving that problem. By discovering AI agents, understanding their purpose, and enforcing least-privilege access, organizations can safely deploy autonomous systems at scale.

This is the approach Token Security is pioneering. Because in a world of autonomous systems, security cannot rely on static permissions or prompt filters alone. It must understand intent.

Want to see what intent-based AI agent security looks like in practice? Schedule a demo of the Token Security platform today.
