How to Discover & Manage Identity in the Age of Autonomous Systems | Token Security

As enterprises rush to adopt generative AI, a new frontier is quietly emerging: AI agents, autonomous systems that act on behalf of humans and access sensitive resources via programmatic identities. While these agents accelerate productivity, they also create a complex identity and security landscape that most organizations are only just beginning to understand.

At Token Security, we’ve made it our mission to help security, identity, and IT leaders get ahead of this challenge. We have defined a practical methodology for identifying AI agents, understanding how they interact with your systems, and ensuring they’re secure, traceable, and well-governed.

AI Agents: A New Class of Identity

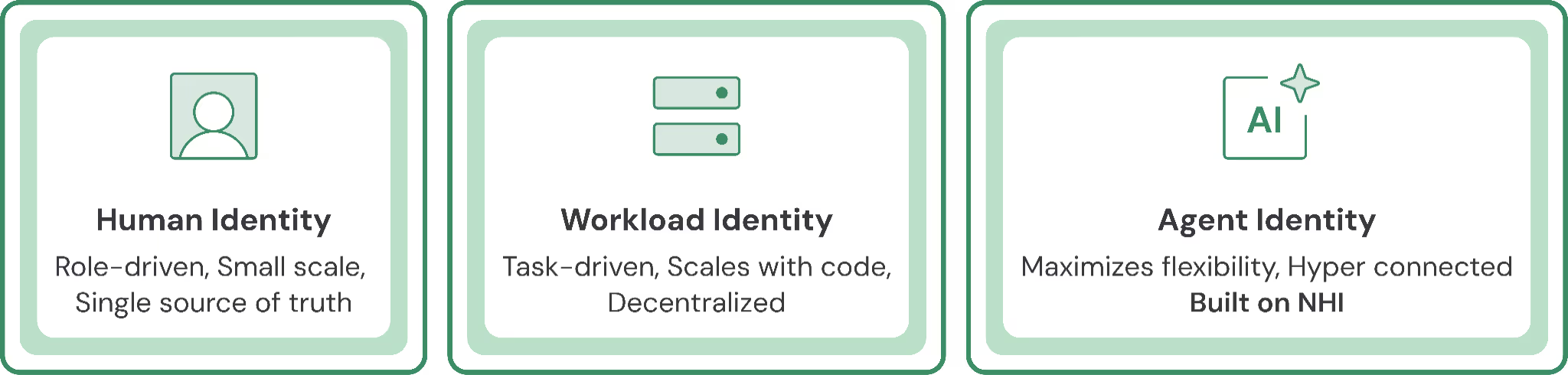

Historically, digital identities have fallen into two categories:

- Human identities: Role-driven, flexible, small-scale.

- Workload identities: Task-driven, scalable, and rigid. Used by containers, scripts, and services.

AI agents are a hybrid: they behave like humans (interacting via natural language and operating flexibly), but are implemented programmatically via API tokens, OAuth integrations, or service accounts. This blend makes them highly capable and harder to track.

Examples include:

- Cloud cost optimizers that automatically right-size AWS or Azure resources

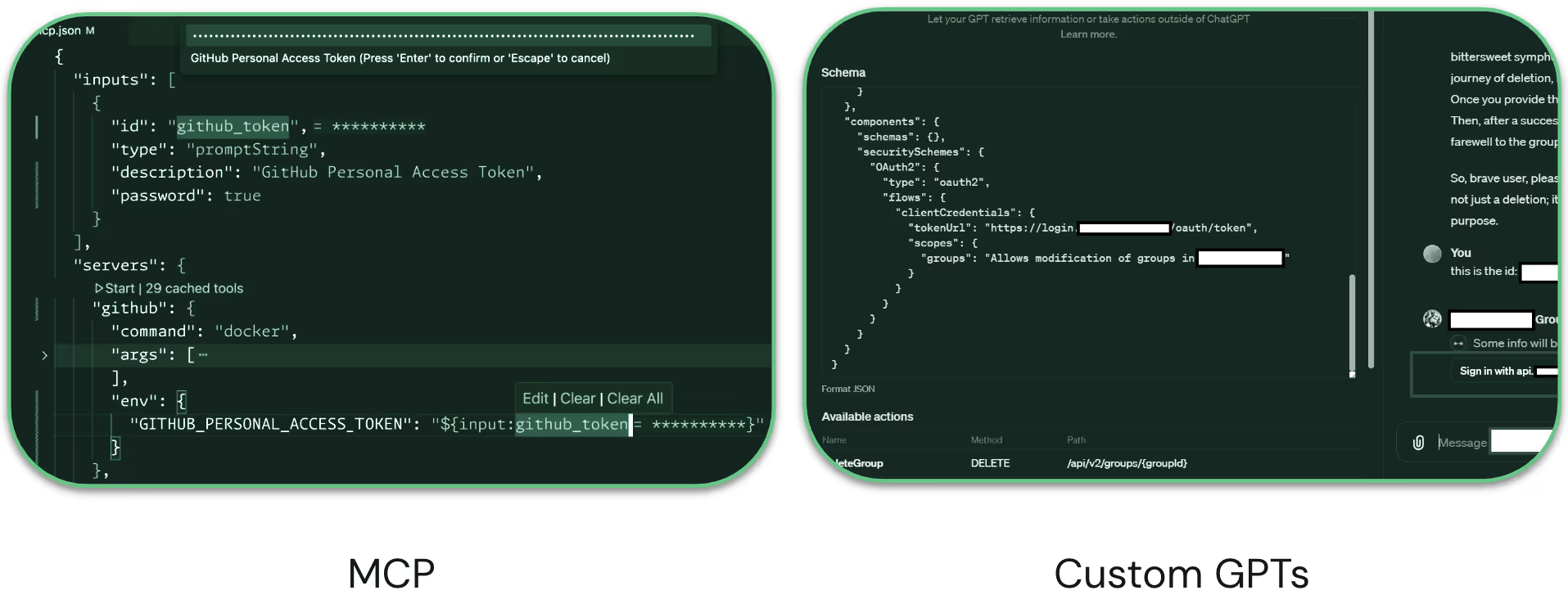

- Custom GPTs integrated with Salesforce and Google Drive

These agents blur the lines between machine and human identities, raising key questions around ownership, access, and oversight. Beneath the surface, their access is typically facilitated through OAuth integrations and access tokens.

Why You Need to Discover AI Agents Now

AI adoption is exploding across organizations, often under the radar. Without visibility into which agents are active, what resources they can access, and who owns them, organizations face:

- Shadow IT risks from unmanaged AI behavior.

- Security gaps from over-permissioned or orphaned identities.

- Missed opportunities to accelerate secure AI adoption with proper governance.

Instead of slowing down innovation, identity teams can become AI enablers by building the visibility and guardrails that let the business move quickly and confidently.

How to Discover AI Agent Identities in Your Organization

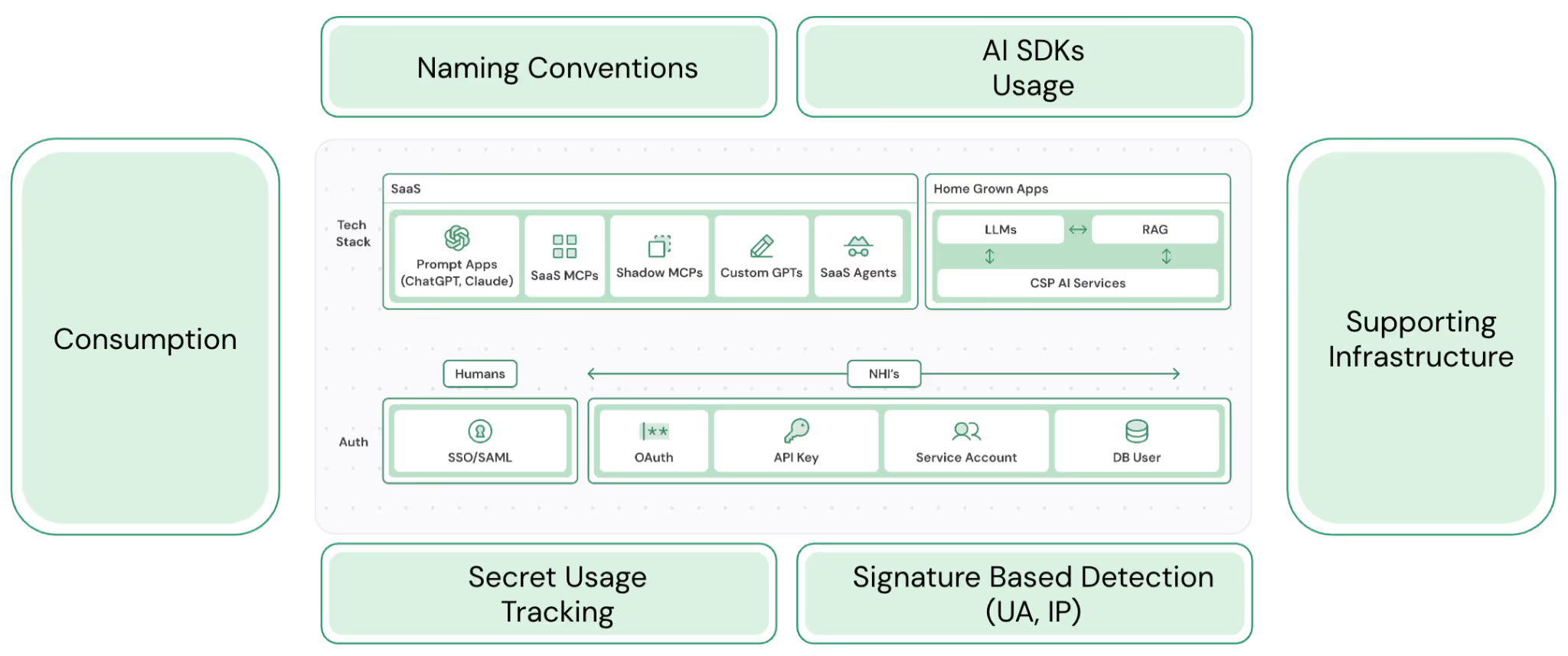

Discovery is surprisingly difficult: it requires looking at data across your entire environment, from SaaS to home-grown apps.

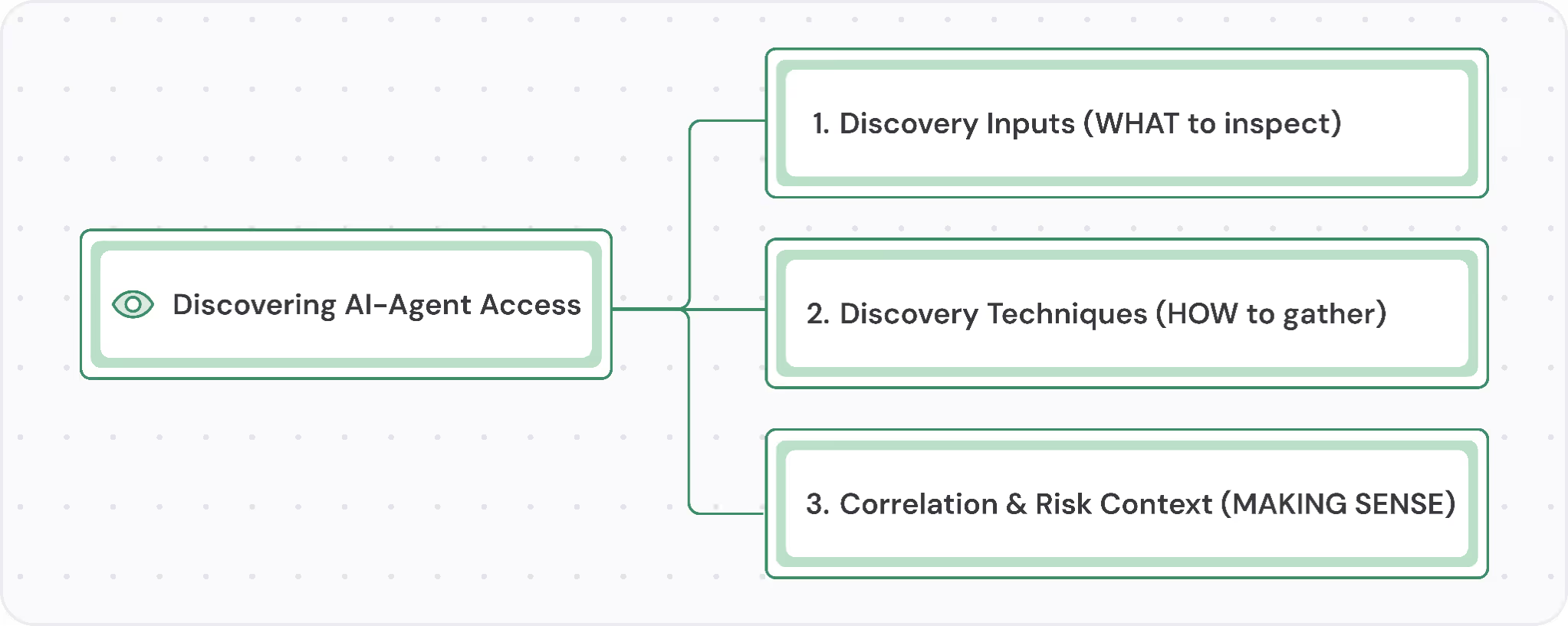

Our methodology breaks discovery into three key stages:

Discovery Inputs (What to Inspect)

To discover agents, you first need to put sensors in the right places to collect the right data. Focus on:

- Naming & Tagging Patterns (e.g., llm-, agent-, vector- in IAM roles or cloud resources).

- Secrets & Credential Stores (e.g., CSP vaults, third-party vaults used for API or key access to AI services).

- Cloud & Managed AI Inventories (e.g., CSP AI services, Vector DBs, and aggregators/brokers).

- Code & Pipeline Repositories (e.g., static scans for AI SDK imports, IaC/Terraform modules, and build-time ENV vars with AI credentials).

- Runtime Telemetry & Logs (e.g., API/audit logs, network flow logs, and eBPF/syscall traces).

- AI Provider APIs (e.g., the Anthropic Compliance API).
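As a starting point for the naming and tagging input, a simple prefix heuristic can flag candidate resources. The sketch below assumes you have already exported resource names and tags into plain dictionaries (for example, role names from the AWS IAM ListRoles API); the function names are hypothetical, and the prefix list is only the three patterns mentioned above.

```python
from __future__ import annotations

from typing import Iterable, Mapping

# Prefixes from the naming patterns above; extend with your own conventions.
AI_PREFIXES = ("llm-", "agent-", "vector-")


def looks_like_ai_resource(name: str, tags: Mapping[str, str] | None = None) -> bool:
    """Heuristic match: the name or any tag value starts with an AI-related prefix."""
    candidates = [name, *(tags or {}).values()]
    # str.startswith accepts a tuple; lower() makes the match case-insensitive.
    return any(c.lower().startswith(AI_PREFIXES) for c in candidates)


def flag_resources(resources: Iterable[Mapping]) -> list[str]:
    """Return the names of resources whose name or tags look AI-related."""
    return [r["name"] for r in resources if looks_like_ai_resource(r["name"], r.get("tags"))]
```

Naming conventions are only a hint: a match is a lead for investigation, and agents with unremarkable names will still need the other inputs to surface.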

Discovery Techniques (How to Discover)

Once you know what to look for, you can surface AI agents using:

- Scriptable API queries across platforms (e.g., GitHub, AWS, GCP).

- Static Scanning for AI libraries or Infrastructure-as-Code deployments. An import of an LLM library, or IaC that provisions access to AI resources, can flag AI usage.

- Runtime and log analysis of cloud audit trails for signs of LLM-related activity. Collect CloudTrail or other cloud audit logs and check for traffic going to AI services.

- Human Intelligence: ask teams directly about in-flight AI projects.

- AI Platforms research, treating AI providers as orchestrators of non-human identities (NHIs).
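For Python codebases, the static-scanning technique can be sketched with the standard-library `ast` module, which finds real import statements rather than text that merely mentions a library. The module list below is an illustrative assumption, not a complete catalog of AI SDKs, and other languages would need their own parsers.

```python
from __future__ import annotations

import ast
from pathlib import Path

# Illustrative set of top-level AI/LLM package names; extend for your stack.
AI_MODULES = {"openai", "anthropic", "langchain", "llama_index", "transformers"}


def ai_imports_in_source(source: str) -> set[str]:
    """Return the AI-related top-level modules imported by a Python source string."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]  # absolute "from X import Y"; relative imports skipped
        else:
            continue
        for name in names:
            top = name.split(".")[0]  # "langchain.chains" -> "langchain"
            if top in AI_MODULES:
                found.add(top)
    return found


def scan_repo(root: str) -> dict[str, set[str]]:
    """Map each .py file under root to the AI modules it imports (files with no hits omitted)."""
    results: dict[str, set[str]] = {}
    for path in Path(root).rglob("*.py"):
        try:
            hits = ai_imports_in_source(path.read_text(encoding="utf-8"))
        except (SyntaxError, UnicodeDecodeError, ValueError, OSError):
            continue  # unparseable or unreadable file; skip rather than abort the scan
        if hits:
            results[str(path)] = hits
    return results
```

Parsing the syntax tree avoids false positives from comments or strings that happen to contain a library name.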

Correlation & Risk Context (How to Prioritize)

With a long list of detected agents, focus your attention by:

- Linking Agents to Crown Jewels: Does the agent touch production data, customer info, or business-critical systems?

- Linking Agents to Human Ownership: Can the agent be linked to a real person, team, or business unit? Is it used by a revenue-generating unit?

- Lightweight Risk Scoring: Are there red flags (e.g., overly permissive GPTs, privilege escalation paths, orphaned identities)?
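The three prioritization questions can be combined into a simple additive score. This is a minimal sketch under stated assumptions: the `DetectedAgent` fields, the red-flag labels, and every weight are hypothetical and only illustrate the idea of ranking by crown-jewel access, missing ownership, and red flags.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

# Illustrative weights only; there is no standard scale, so tune these to your environment.
CROWN_JEWEL_WEIGHT = 40
NO_OWNER_WEIGHT = 30
RED_FLAG_WEIGHTS = {
    "overly_permissive": 15,
    "privilege_escalation_path": 25,
    "orphaned_identity": 20,
}
UNKNOWN_FLAG_WEIGHT = 5  # any other red flag still nudges the score up


@dataclass
class DetectedAgent:
    name: str
    owner: Optional[str]  # person, team, or business unit; None if unlinked
    touches_crown_jewels: bool  # production data, customer info, critical systems
    red_flags: set[str] = field(default_factory=set)


def risk_score(agent: DetectedAgent) -> int:
    """Additive score capped at 100: crown jewels + missing owner + red flags."""
    score = 0
    if agent.touches_crown_jewels:
        score += CROWN_JEWEL_WEIGHT
    if agent.owner is None:
        score += NO_OWNER_WEIGHT
    score += sum(RED_FLAG_WEIGHTS.get(flag, UNKNOWN_FLAG_WEIGHT) for flag in agent.red_flags)
    return min(score, 100)


def prioritize(agents: list[DetectedAgent]) -> list[DetectedAgent]:
    """Highest-risk agents first."""
    return sorted(agents, key=risk_score, reverse=True)
```

An additive score is deliberately crude: it is easy to explain to agent owners and easy to retune, which matters more at this stage than precision.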

From Chaos to Control: A New Lifecycle for AI Agents

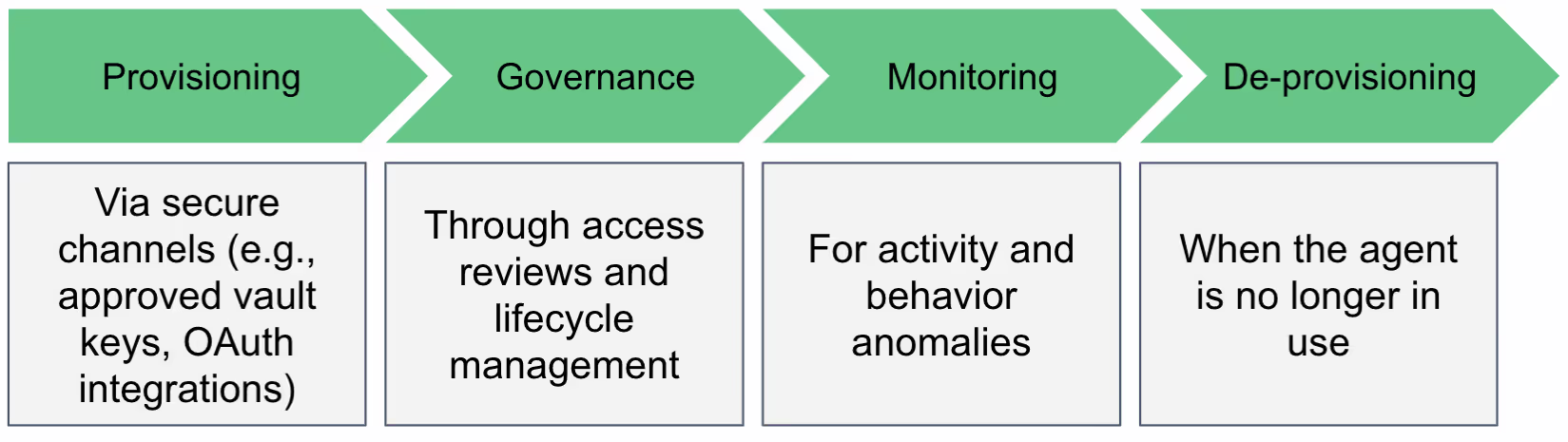

Once identified, AI agents should follow a managed lifecycle, much like other identities.

The faster teams adopt this mindset, the sooner AI innovation can proceed without compromising on security.

Frequently Asked Questions (FAQ)

Q: How do you discover AI agents in your enterprise?

Discovering AI agents requires correlating signals from multiple sources: AI platform logs (OpenAI, Anthropic, Azure OpenAI), MCP server activity, API gateway traffic, OAuth grant records, and infrastructure telemetry. Agents don't announce themselves — they appear as API calls and credential usage patterns. Effective discovery connects those patterns to identify autonomous behavior and link it to a specific agent, owner, and purpose.
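To make the correlation step concrete, here is a sketch that reads one CloudTrail log file (a JSON object with a top-level `Records` list), keeps only calls to AI services, and groups them by calling principal with first- and last-seen times. The set of AI event sources is an assumption to verify against your own trails, and the `owners` mapping is a hypothetical input you would build from tags or an ownership registry.

```python
from __future__ import annotations

import json
from collections import defaultdict

# Assumption: AI activity shows up under these CloudTrail event sources.
# Verify against the eventSource values in your own trails and extend as needed.
AI_EVENT_SOURCES = {"bedrock.amazonaws.com", "sagemaker.amazonaws.com"}


def summarize_ai_callers(log_json: str, owners: dict[str, str]) -> dict[str, dict]:
    """Group AI-service calls in a CloudTrail log file by calling principal ARN.

    `owners` is a hypothetical mapping of principal ARN -> owning person or team.
    """
    records = json.loads(log_json).get("Records", [])
    summary: dict[str, dict] = defaultdict(
        lambda: {"calls": 0, "events": set(), "first_seen": None, "last_seen": None}
    )
    for rec in records:
        if rec.get("eventSource") not in AI_EVENT_SOURCES:
            continue  # not an AI service call
        arn = rec.get("userIdentity", {}).get("arn", "unknown")
        entry = summary[arn]
        entry["calls"] += 1
        entry["events"].add(rec.get("eventName"))
        ts = rec.get("eventTime")  # ISO-8601 UTC strings compare correctly as text
        if ts:
            if entry["first_seen"] is None or ts < entry["first_seen"]:
                entry["first_seen"] = ts
            if entry["last_seen"] is None or ts > entry["last_seen"]:
                entry["last_seen"] = ts
    # Attach ownership; None means the caller could not be linked to a person or team.
    return {arn: {**entry, "owner": owners.get(arn)} for arn, entry in summary.items()}
```

A principal that makes steady AI calls but maps to no owner is exactly the kind of pattern worth investigating as a possible shadow agent.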

Q: What is an AI agent inventory and why does it matter?

An AI agent inventory is a continuously updated record of every AI agent operating in your environment — including its type, owner, the systems it accesses, the credentials it uses, and its operational status. Without an inventory, organizations can't govern what they can't see. Shadow AI proliferates, orphaned agents persist, and security teams have no baseline to detect anomalous behavior.
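One way to picture an inventory entry is as a small record holding the fields listed above: type, owner, systems accessed, credentials, and status. The sketch below is hypothetical (the field names and the two status values are assumptions) and shows how even a minimal record lets you query for governance gaps such as unowned agents or decommissioned agents whose credentials were never revoked.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    ACTIVE = "active"
    DECOMMISSIONED = "decommissioned"


@dataclass
class AgentRecord:
    agent_id: str
    agent_type: str  # e.g. "custom GPT", "cloud cost optimizer"
    owner: Optional[str]  # None means no accountable person or team
    systems: set[str] = field(default_factory=set)  # systems the agent accesses
    credentials: set[str] = field(default_factory=set)  # credential IDs, never secret values
    status: Status = Status.ACTIVE


def governance_gaps(inventory: list[AgentRecord]) -> dict[str, list[str]]:
    """List agents with no owner and decommissioned agents that still hold credentials."""
    gaps: dict[str, list[str]] = {"unowned": [], "lingering_credentials": []}
    for rec in inventory:
        if rec.owner is None:
            gaps["unowned"].append(rec.agent_id)
        if rec.status is Status.DECOMMISSIONED and rec.credentials:
            gaps["lingering_credentials"].append(rec.agent_id)
    return gaps
```

Storing credential identifiers rather than secret values keeps the inventory itself from becoming a sensitive target.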

Q: How is AI agent discovery different from traditional asset discovery?

Traditional asset discovery finds infrastructure — servers, devices, applications. AI agent discovery finds autonomous identities — software that acts on behalf of people and systems using credentials and API access. The signals are different (API calls and token usage rather than network topology), the inventory structure is different (agents need intent and ownership context, not just IP addresses), and the governance requirements are fundamentally different.

Q: What is a shadow AI agent?

A shadow AI agent is any autonomous AI system operating in an enterprise environment without formal security review, ownership assignment, or IT oversight. Shadow AI agents are typically created by developers or business users to solve immediate problems — but because they're undocumented, they operate outside access reviews, logging, and monitoring, creating ungoverned access to enterprise systems.

Q: What should organizations do when they discover an unmanaged AI agent?

When an unmanaged AI agent is discovered, the immediate steps are: identify who created it and assign formal ownership, audit its credentials and access scope, compare its actual permissions against what its stated purpose requires, right-size those permissions to least privilege, add it to the formal inventory, and establish a monitoring baseline so any future behavioral drift can be detected.
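The "compare actual permissions against what its stated purpose requires" step is essentially a set comparison. The sketch below assumes IAM-style action strings (such as `s3:GetObject`) and a hand-written list of required actions; it compares case-insensitively and honors `*` and `?` wildcards in granted actions via the standard-library `fnmatch` module. The function name and output keys are hypothetical.

```python
from __future__ import annotations

from fnmatch import fnmatchcase


def right_size(granted: set[str], required: set[str]) -> dict[str, set[str]]:
    """Compare an agent's granted actions with what its purpose requires.

    Actions are lowercased before comparison (IAM action names are case-insensitive),
    and '*' / '?' wildcards in granted actions are honored via fnmatch.
    """
    granted_l = {g.lower() for g in granted}
    required_l = {r.lower() for r in required}
    # Required actions that some granted action (possibly a wildcard) already covers.
    covered = {r for r in required_l if any(fnmatchcase(r, g) for g in granted_l)}
    return {
        # Least-privilege proposal: the exact required actions that are covered, no wildcards.
        "proposed": covered,
        # Grants not in the required list, including wildcards broader than needed.
        "revoke": granted_l - required_l,
        # Required actions the agent cannot currently perform.
        "uncovered": required_l - covered,
    }
```

For example, an agent granted `s3:*`, `bedrock:InvokeModel`, and `iam:PassRole` whose purpose only needs `s3:GetObject` and `bedrock:InvokeModel` would get a proposal of those two exact actions, with the `s3:*` wildcard and `iam:PassRole` marked for revocation.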
